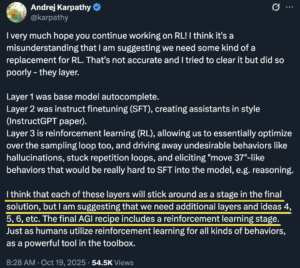

In October 2025, Andrej Karpathy posted a reflection that quietly reshaped how researchers think about the road to AGI. He wrote that each of the three existing layers of AI training—base model pretraining, supervised fine-tuning, and reinforcement learning—will remain part of the final recipe, but that “we need additional layers and ideas 4, 5, 6, etc.” It was not a dismissal of RL but an acknowledgment that we are missing entire stages of cognition. The puzzle isn’t what these new layers are. The real question is why we have not yet found them. The answer is simple and brutal: not because we lack imagination, but because we lack compute.

The first three layers have carried AI from autocomplete toys to conversational intelligence. Layer 1, pretraining, taught models to predict the next token with uncanny fluency. They became masters of language but blind to meaning. Layer 2, supervised fine-tuning, made them obedient. By imitating good examples, they learned to follow instructions. Layer 3, reinforcement learning, made them polite and cooperative. Models learned to please users, to avoid offense, and to sound wise even when they were not. Together, these layers gave birth to tools like ChatGPT and Claude—systems that simulate thought without ever truly engaging in it.

The limits of these layers are now obvious. Predicting the next word does not equal understanding. Obedience does not equal reasoning. Reinforcement does not equal reflection. These models can pass exams, write essays, and summarize papers, but they cannot maintain memory, grasp causality, or develop moral sense. They optimize for applause, not accuracy. We built assistants, not thinkers.

Karpathy never defined what layers 4 to 8 would be, but we can guess the missing pieces by looking at where current systems fail. The fourth layer might be reflection—the ability to pause, check, and correct one’s own reasoning before output. The fifth might be continuity—true long-term memory that preserves beliefs and experiences across time. The sixth could be causal reasoning—understanding why something happens, not just that it correlates. The seventh could be moral sense—evaluating decisions through principles rather than rules. The eighth could be social coordination—learning to interact with other agents and adapt to messy human systems. These are not mystical ideas. They are computationally expensive ones.

Every one of these layers demands an order of magnitude more compute than the last. Reflection means running the model multiple times per query. Continuity means storing and retrieving enormous memory graphs. Causal reasoning requires architectures that can simulate alternate realities. Moral and social reasoning mean modeling entire societies of interacting agents. You can’t brute-force that with today’s GPUs. The bottleneck is physical—energy, cost, and hardware efficiency.

AI progress has always depended less on conceptual breakthroughs than on hardware revolutions. The idea of convolutional neural networks existed for decades before GPUs made large-scale image training possible. The transformer architecture was simple on paper but transformative only once data centers could train billions of parameters. Reinforcement learning from human feedback was theorized long before anyone could afford to run millions of preference comparisons. Each paradigm shift arrived not from genius but from compute finally catching up to curiosity.

We are now at the edge of that curve. Training GPT-4 reportedly cost more than one hundred million dollars. A model capable of robust causal reasoning—hypothetical Layer 6—might need ten or a hundred times that. The energy cost alone would rival small nations. Even Microsoft and Google, with their billion-dollar clusters, are hitting walls of scale. It’s not that researchers don’t know what to try next. It’s that running the experiments would bankrupt them.

Reinforcement learning remains central to the AGI recipe, but it cannot carry the rest of the weight. RL teaches models to optimize for human approval, not for truth. It’s like teaching a student only through applause—they become skilled performers, not independent thinkers. New variants like meta-reinforcement learning or hierarchical RL could push beyond this, allowing systems to plan, reason, and learn across tasks. But each step multiplies the compute requirement. To teach reflection, the model must learn to run itself repeatedly. To teach social alignment, it must coordinate with others. Every layer adds loops within loops.

The geopolitics of compute mirrors the scientific one. The United States leads in brute-force capability. OpenAI, Anthropic, and Google run the largest clusters on Earth, powered by NVIDIA’s best chips. Their strategy is simple: make models bigger, train longer, and let scale smooth out inefficiencies. It works, but the returns are shrinking. Each new model costs exponentially more for marginal improvement.

China, limited by chip export restrictions, plays a different game. Labs like DeepSeek and Alibaba focus on efficiency—compressing models, pruning parameters, reusing compute. They brag about GPT-4-level performance with one-tenth the cost. Later audits suggest the real figure was higher, but the point stands: constraint breeds creativity. Still, even their optimizations face a hard ceiling. You can only squeeze so much juice out of silicon. Efficiency is logarithmic. Cognitive depth demands linear growth in energy.

Both sides are staring at the same wall. The American path of abundance is unsustainable. The Chinese path of thrift is asymptotic. The next leap won’t come from spending more or saving better. It will come from changing the substrate itself—neuromorphic chips that compute like neurons, optical processors that compute with light, or quantum-hybrid systems that can reason over complex state spaces natively. Without such shifts, Layers 4–8 will remain theoretical.

The larger question is whether we can afford to find out. Each stage of scientific progress that redefined human understanding—from particle physics to genomics—required infrastructure measured in billions. The next breakthrough in intelligence may demand the same. A global AI collider. A shared compute commons dedicated not to product cycles but to exploration. Private companies alone can’t fund that kind of curiosity. Governments might have to treat AGI research as a public good, not a commercial arms race.

There is also a deeper risk. The success of Layers 1–3 has created a local maximum. The industry keeps optimizing the same loop—slightly larger context windows, slightly smoother alignment, slightly faster inference—because it’s profitable and safe. But evolution stalls when it perfects an old design. The next step may require breaking the loop entirely: architectures that learn like brains, not autocomplete engines.

Karpathy’s message wasn’t mystical. It was practical. We have reached the end of what scaling can give us for free. The next layers will not emerge from better prompts or longer training runs. They will emerge from new ways of thinking—and new ways of computing. Until then, we’re not discovering intelligence. We’re renting it, one GPU hour at a time.