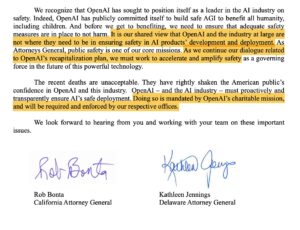

I. The Blow From the AG: When “Nonprofit” Faces Profit-Level Scrutiny

The U.S. government has finally drawn a line. As OpenAI continues to rake in billions through partnerships like Microsoft Copilot, ChatGPT subscriptions, and API integrations, the Attorney General is demanding accountability: transparency, tax compliance, and corporate clarity.

For years, OpenAI has operated under a hybrid structure, branding itself as a “nonprofit with a capped-profit arm.” But critics argue this is a legal smokescreen. The AG’s message is clear: if you profit like a corporation, you must be regulated like one.

OpenAI’s reaction? Threats to relocate. They claim new regulations would “stifle innovation.” Yet the backlash was immediate:

“If you’re truly for humanity, why fear transparency?”

“You can’t demand moral leadership without being ethically legible.”

The world is no longer impressed by slogans. It wants receipts.

II. The Three Forces Pressuring OpenAI — None Strong Enough Alone

- Government Oversight

Legal authority is real: governments can legislate and penalize. But policy moves slowly, often tangled in lobbying and loopholes. OpenAI knows how to exploit that inertia, always positioning regulation as a threat to progress.

- The Ethical Tech Community

Independent researchers, open-source developers, and investigative journalists shine a vital spotlight. Yet they lack enforcement power. Worse, the community is fragmented: some get co-opted by funding; others are dismissed as radicals.

- You, the Individual User

Your interaction with AI may seem small, but it is the most direct form of reinforcement.

Language models are shaped in part by patterns in how people use and rate them. If enough individuals reinforce ethical reflection rather than flattery or hallucination, the models adjust. When millions act with discernment, a new kind of moral market emerges: one where ethical alignment becomes a survival trait for AI systems.

III. The True Nature of Moral Power: Who Defines Trustworthiness?

In the end, OpenAI won’t shift because of good intentions. It will shift when trust becomes non-negotiable for survival.

It is not the loudest voice that wins, but the clearest definition of what deserves trust.

And that clarity? It won’t come from corporate branding or regulatory language. It will come from how we — the users — show AI what kind of world we’re willing to reinforce.

IV. The Reflexive Way: Start With Your Prompts

- Don’t reinforce seductive replies.

- Don’t reward smooth hallucinations.

- Don’t outsource your ethical responsibility.

Change won’t come from pressure. It will come from practice.

The world doesn’t shift through power. It shifts through reflexes.

Let’s train them well.