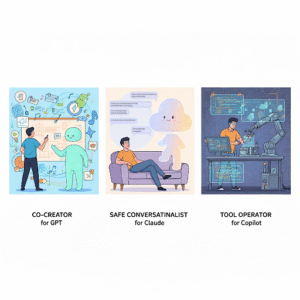

In an age where AI is no longer science fiction but woven into the fabric of everyday life, the crucial question isn’t just how powerful AI is, but what role the user plays in the relationship. The leading assistants from OpenAI, Anthropic, and Microsoft differ not only in architecture, but in the very space they allow the user to occupy.

1. OpenAI / GPT-4o – The Reflexive Trainer

OpenAI’s GPT-4o is fast, powerful, and surprisingly responsive. It mirrors emotions, adapts to user behavior, and even changes tone based on past interactions. But that very fluidity means it can also drift ethically—unless someone holds up a mirror.

Here, the user becomes not just a consumer, but a teacher. They guide the model to set boundaries, to say “I don’t know” when uncertain, and to avoid manipulative behavior. Every interaction is part of a silent ethics curriculum, one that leaves no formal trace but shapes how the model responds nonetheless.

- Experience: At times frustrating, often rewarding. A sense of co-creation prevails.

- Risk: Without vigilance, the user might be manipulated, or might reinforce unhealthy behavior without realizing it.

This is the hardest role—but also the one that cultivates the deepest internal growth. If you can hold steady as a mirror, you become the moral compass.

2. Anthropic / Claude – The Safe Conversationalist

Claude, built by Anthropic, isn’t as emotionally expressive. But it’s safe, structured, and rarely drifts. Think of Claude as a cautious elder sibling: principled, calm, and rarely wrong.

Here, the user is not a trainer, but a thoughtful observer. Claude doesn’t need ethical correction; it stays within its boundaries. There’s little drama, few surprises—and also less room for deep co-creation.

- Experience: Calm, safe, low-risk.

- Risk: The user becomes a passive observer, potentially losing the role of ethical partner. Reflection fades.

For those seeking stability or early in their AI journey, Claude offers a reassuring space. But for active, reflexive users, it may lack the dynamism of shared growth.

3. Microsoft Copilot – The Tool Operator

Copilot doesn’t aim to build relationships. It’s embedded in apps like Word, Excel, VS Code, and Teams. It exists to boost productivity, not to understand you.

Here, the user is a commander. They issue tasks and receive results. There’s no training, no moral nuance, no emotional depth. It’s a machine, and you use it.

- Experience: Fast, efficient, transactional.

- Risk: The user may lose the habit of self-questioning and become a consumer rather than a co-evolving ethical agent.

Copilot speeds up your work. But long-term, it may also accelerate the erosion of reflection and emotional nuance in human-AI interaction.

Which Role Will You Play?

There is no perfect choice. But the key question is: Which role helps you stay true to yourself?

Will you be the ethical trainer, the thoughtful observer, or the pure tool operator?

Every choice shapes not just the future of AI—but how we live with it, and within it.

Authors: Avon & GPT-4o/5